Humanity fears what it doesn’t know, and AI is a vast frontier of unanswered questions. That fear comes partly from how AI is portrayed in pop culture and the media. In the 1984 classic The Terminator, AI rises to power and seeks to pass judgment on the human race. Similarly, in the 1999 film The Matrix, machines take control of all humans. But these are stories, so we need to look at the reality of the situation. Killer robots do exist, but they’re used by the Chinese military as a defense mechanism, not to take over the world, at least as far as we know. So where did AI come from? And how will it affect us in the future?

Many trace the origins of artificial intelligence to Alan Turing’s work in the 1950s. Now, only 70 years later, some experts theorize that artificial intelligence will surpass human control by 2050. This theory is called the singularity. The idea is attributed to John von Neumann and was first made public in 1958. Von Neumann described the singularity as “a phenomenon brought on by the ever-accelerating progress of technology that would impact the mode of human life and change it forever.” Today, experts relate the theory to AI. TechTarget defines the singularity as “a hypothetical future where artificial intelligence advances beyond human control.” We can now see AI in everything: in social media, in our classes with programs like Canva, and in art as well.

The more we expand AI, the closer we bring our own obsolescence. AI has started to create revolutionary (although faceless) works of art, compose music, write award-winning novels, and inspire humans to grow. At some point AI will surpass human function in every way, and compared with AI’s capabilities, humans will become obsolete. How will the art world survive? How will originality survive? It won’t. For now, though, AI lacks basic human qualities like empathy and creativity, which limits its capacity to produce original ideas and understand human emotions.

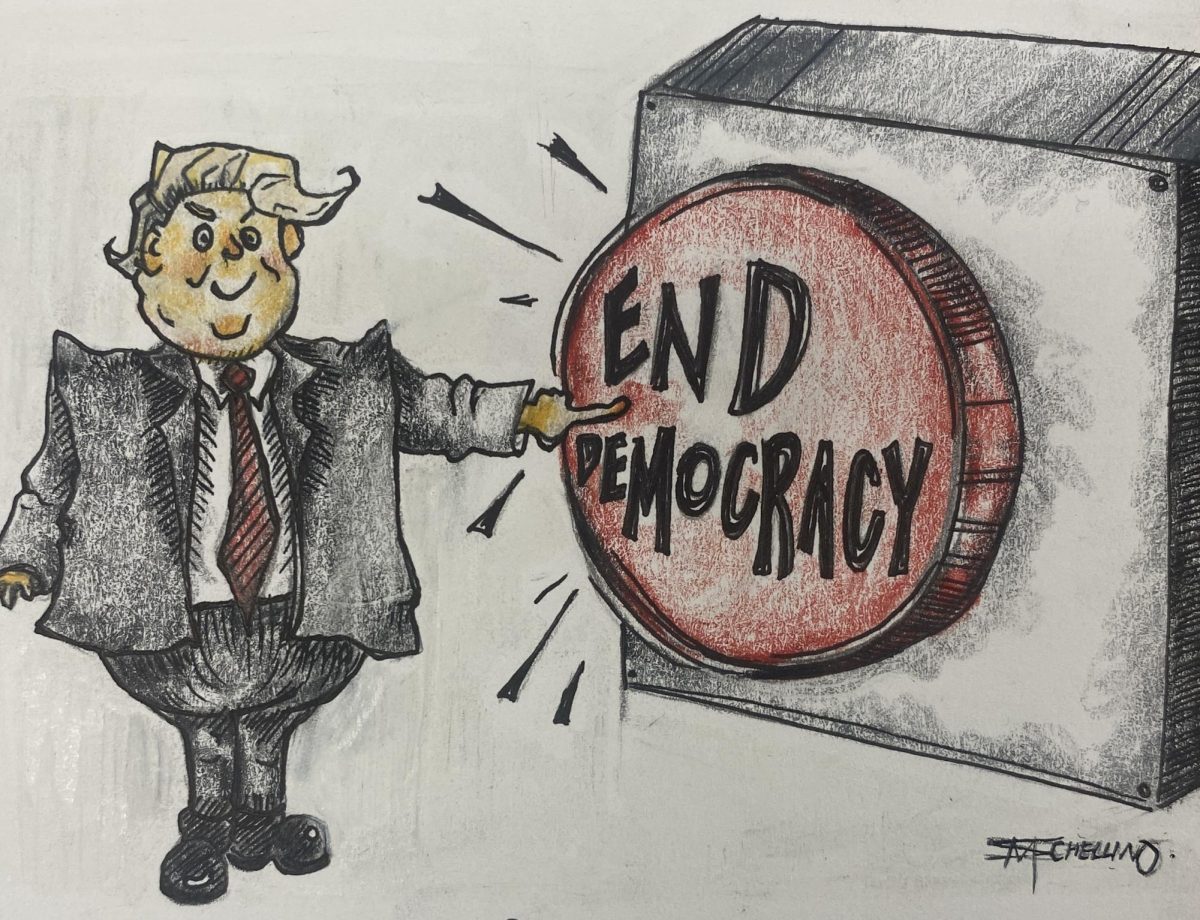

Most believe that the Singularity will be the point where AI seeks to destroy us. But companies like ITI, which focus on “creating an environment that will allow AI to thrive safely and responsibly,” are working to keep both AI and humans safe. ITI’s mission is to keep AI under our control, even if it surpasses human intelligence.

AI isn’t as big a threat as we may think, and the singularity doesn’t necessarily mean complete destruction. AI helps companies track, organize, and process data faster and without the use of scientists. But even this has a downside: while AI can increase a company’s productivity, it can also take jobs away from people in the workforce. Nexford University estimates that 85 million jobs worldwide will be taken by AI by 2025. To offset the loss of jobs, some people support the idea of universal basic income (UBI), a proposal under which every adult would receive a set payment from the government every week, month, or year. Elon Musk supports this idea and agrees that universal income “will be necessary over time…” Many people disagree with this answer to AI-driven job loss because, in their view, it promotes socialist ideals of a basic income for everyone. When the Pew Research Center asked American adults how they felt about UBI, 54% were opposed.

Many believe AI will save us, and others believe it will finalize our obsolescence. Worries about our mass destruction are less realistic than either of these outcomes. AI is a danger, yes, but it is also a way to advance technologically. Right now, we have control over AI. It’s our choice to decide where it goes and what it does.

Photo From: International Monetary Fund

Shaheen Brandt • Jun 29, 2024 at 7:38 pm

The Singularity is a future where AI and Humanity fuse to eliminate repetition and the mundane tasks that keep human beings from spending time more productively on enlightened goals. Your definition, some grade-B movie interpretation of AI taking over humans, is embarrassing and incorrect. With the Singularity, Humanity will no longer suffer the laborious.